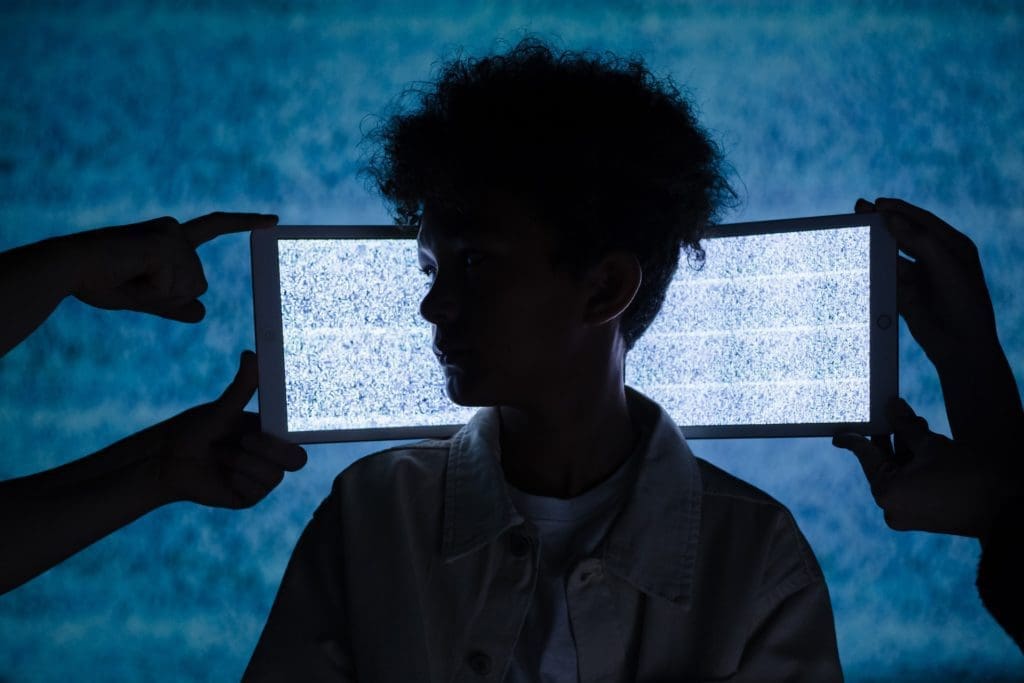

Behind every image, video or screen, a real child is being sexually abused

The rapid expansion of digital technology and increased access to the internet have transformed the lives of children and young people worldwide, in both positive and negative ways. Technology offers remarkable possibilities to people everywhere.

It can take just three clicks to discover child sexual abuse content on the internet.

At the same time, child sexual abuse material (CSAM) is becoming ever easier to access online, and its volume is growing so quickly that we now face a ‘tsunami’ of such material.

To address this fast-growing threat, it is important to understand its meaning and scope.

One way to categorise child sexual abuse material is by how a person interacts with it: whether they produce it, search for or view it, or share or store it.

Repeat sharing “serves to re-victimise and thus further exacerbate the psychological damage to the abused”.

United Nations Office on Drugs and Crime, 2015

Producing child sexual abuse material

In 2020, an AI ‘bot’ operating on Telegram generated 100,000 ‘deepfakes’ depicting real women and girls in sexual acts.

Creating child sexual abuse material by making a photo, video or audio recording, or by producing text or non-photographic visual material. This category also includes manipulating existing child sexual abuse material to create new, unique imagery.

Searching for and/or viewing child sexual abuse material

In 2020, in just one month, three member organisations of the Internet Watch Foundation (IWF) tracked 8.8 million attempts to access child sexual abuse material.

Seeking child sexual abuse material on the internet and viewing or attempting to view it.

Sharing and/or storing child sexual abuse material

Meta reported that more than 90% of its reports to the National Center for Missing & Exploited Children (NCMEC) in October and November 2020 concerned shares or reshares of previously detected content.

Downloading, storing, hosting, uploading and/or sharing child sexual abuse material.

More detailed information on child sexual abuse material, and on child sexual abuse online more widely, is available in our latest Global Threat Assessment.